TL;DR – Get Ready For Medical Advice From Google’s AI Overviews…

Unreliable, full of bugs, and built on a chatbot that one study found wrong 60% of the time. Yep, that’s Google AI Overviews in a nutshell. And now Google thinks it’s ready to dole out medical advice…

- Google is expanding AI Overviews to include medical and health-related advice.

- A new “What People Suggest” feature will surface advice from everyday users across the web.

- Past performance shows AI Overviews have frequently served up misinformation, sometimes dangerously so.

- Experts and researchers remain concerned about the accuracy, safety, and credibility of Google’s AI health push.

- Google’s health division was shuttered in 2021 — and AI has yet to prove it’s a suitable replacement for actual medical professionals.

Google’s AI Overviews have a history of serving up bizarre, dangerous, and just plain wrong answers — and now, the same AI that once suggested putting glue on your pizza is being entrusted with health advice.

Yes, seriously.

From Pizza Glue to Health Recommendations

In a recent blog post, Google’s Chief Health Officer, Karen DeSalvo, announced that AI Overviews will soon “cover thousands more health topics,” thanks to updates to its Gemini AI models and Google’s so-called “best-in-class quality and ranking systems.”

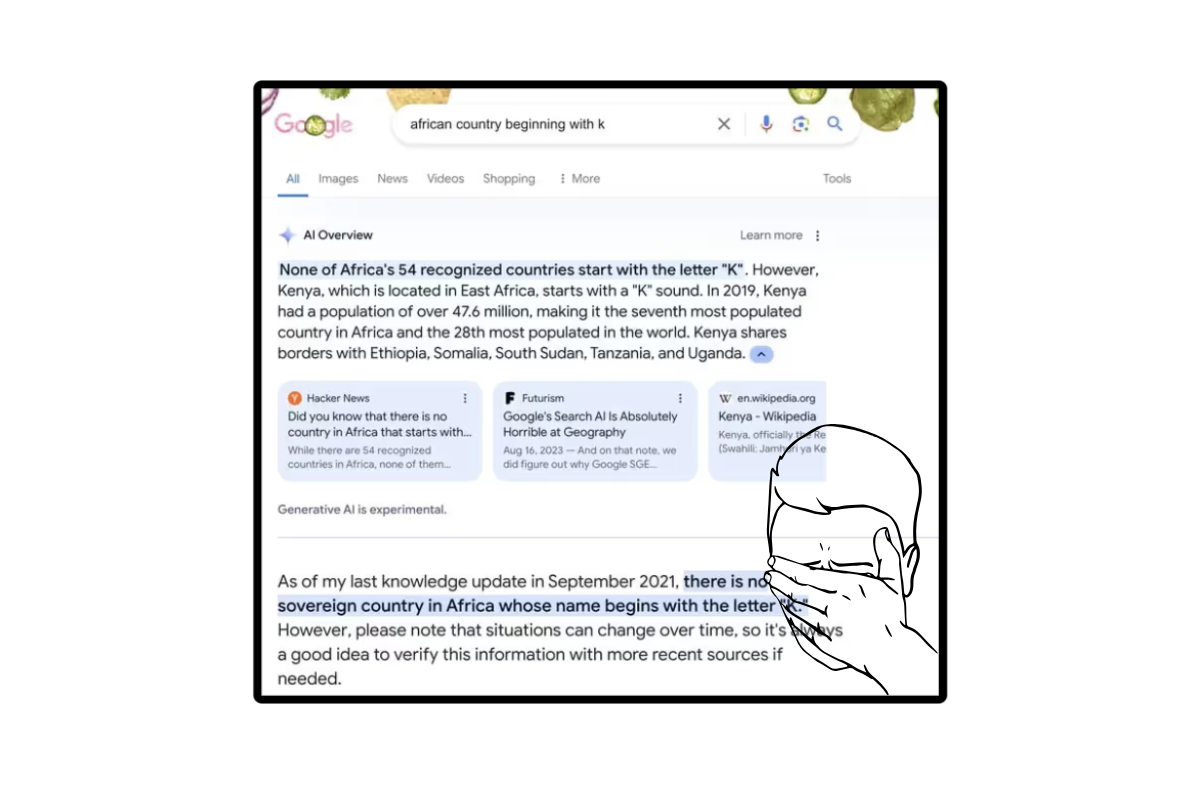

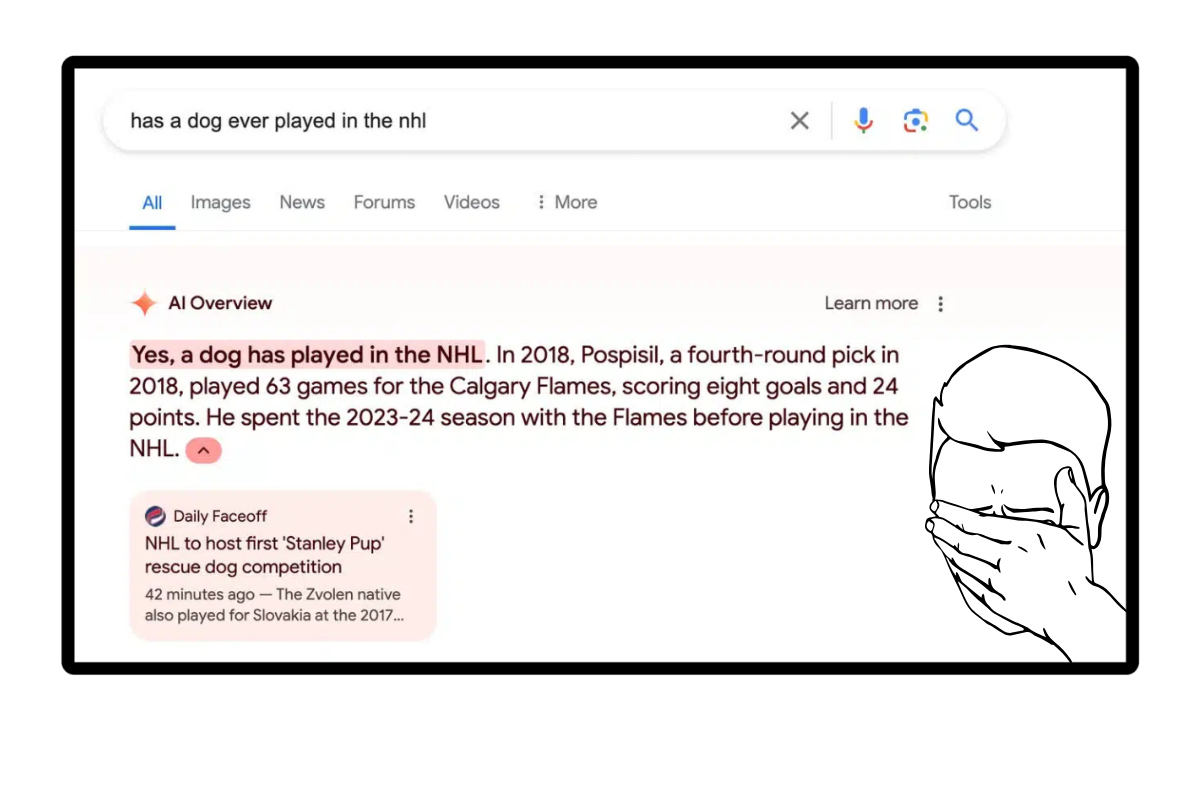

That might sound reassuring — until you remember this is the same system that once confidently claimed:

- That you should bathe with a toaster,

- That baby elephants fit in the palm of your hand,

- And that glue improves the flavor and stickiness of pizza sauce (a response sourced from an 11-year-old joke comment on Reddit, by the way).

So yeah… forgive us if we’re a little skeptical.

“What People Suggest”: Medical Advice From Random Strangers?

The most concerning part of Google’s new update is a feature called “What People Suggest.” It’s designed to organize health tips shared by regular users across the web — which sounds a lot like crowdsourcing medical advice from internet forums and TikTok comments.

Google says it has conducted “rigorous testing and clinical evaluations” of this feature and insists it appears alongside content from authoritative sources.

But when Futurism tried to find it on mobile, it wasn’t even live yet — so it’s unclear how exactly it’s being implemented or moderated.

“Our research shows that people often value hearing from others who are navigating similar health questions,” Google said in a statement.

(Translation: Enjoy your crowdsourced medical advice from a yoga mom on Quora.)

Track Record: AI Overviews Get Basic Stuff Wrong — A Lot

If you’re wondering how reliable any of this will be, a study from Columbia’s Tow Center for Digital Journalism recently found that Google’s Gemini chatbot got basic questions wrong 60% of the time.

Sixty. Percent.

That’s not a margin of error. That’s worse than a coin flip, with a side of hallucination.

And it’s not like this is a one-off issue. AI Overviews have routinely misrepresented facts, pulled information from satire sites like The Onion, and used obscure Reddit comments as sources — all while appearing at the very top of your search results, above legitimate content from actual health professionals.

Google’s AI Health History Isn’t Exactly Reassuring

Let’s not forget: Google already tried to “revolutionize” health care once, through its now-defunct health division, which was shuttered back in 2021.

Most of the people who actually had deep medical expertise at Google were either laid off or reassigned. That legacy doesn’t inspire confidence — and even tech analysts agree.

“If Google’s ambition is to disrupt the healthcare industry, nobody in big tech has succeeded yet,” said Rajiv Leventhal, senior analyst at Emarketer, speaking to Bloomberg.

It’s a fair point. Health care is complex. Doctors go through years of training for a reason — and they don’t source their diagnoses from subreddit threads or parody websites.

So, Should You Trust Google’s AI Health Advice?

Short answer: no, not entirely. At least, not yet.

Google says it has guardrails and safety policies in place to prevent low-quality responses, but even the company admits that “there may not be a lot of high-quality web content available” for some queries. That suggests the AI may still make stuff up when it can’t find reliable info.

In other words: the system will guess. And when it comes to medical advice, guessing isn’t good enough.

The Bigger Picture

This move is part of a larger trend at Google: using AI to shortcut content creation and deliver faster answers — even if the information isn’t always accurate, sourced properly, or safe.

It raises big questions not just about health misinformation, but about the future of search itself.

If AI Overviews continue replacing quality content with questionable summaries, we may end up with a search engine that’s fast, but fundamentally untrustworthy.


source: https://www.knowyourmobile.com/news/google/google-ai-overviews-medical-advice/