It might be a cliché to say, but the Internet really has been taken by storm by the new image generation capabilities of ChatGPT’s 4o model. Everywhere you look, friends, acquaintances, and friends-of-friends are sharing AI-edited photos that resemble the dreamy and painterly aesthetic of films by Studio Ghibli, the Japanese animation studio co-founded by Hayao Miyazaki and known for its rich fantasy worlds and strong anti-war themes. The trend is so widespread that “Ghiblifying”, “Ghiblify”, and “Ghibli-fication” are now used to describe the phenomenon.

It was perhaps inevitable that this sweeping trend would generate significant controversy and divide the public into two distinct camps. The first is a heterogeneous group of AI enthusiasts, opportunists/grifters, and fence sitters: those who have been monitoring the development of Generative AI, weighing its potential benefits and harms, and waiting for the right moment to engage.

The second group consists of people who abhor AI art or anything related to Generative AI. To these individuals, it’s a soulless imitation and slop; it holds no value, and they dismiss the emotional sincerity of users uploading altered images of loved ones, even if motivated by nostalgia or an appreciation for shared cultural references. To them, it’s immoral in every way.

They believe this particular technology has no net benefit, comes at a significant environmental cost, will kill creativity and is built upon uncompensated labour and intellectual property violations. As a result, many within this camp are actively calling for more robust copyright protections and stricter regulation of AI technologies.

This second camp, however, may be ensnared in a rhetorical and ideological paradox. Their stance is shaped not only by a kind of selective historical amnesia but also by dominant narratives circulating within American techno-cultural discourse. Their reactionary posture, frequently accompanied by a strong sense of moral certainty, often becomes a performative act, incentivised by online cultures that reward visible dissent and contrarianism.

And we are all guilty of it, intentionally or unintentionally. Social media platforms reward these actions.

Consider, for instance, that many within this group likely regard Aaron Swartz—the co-creator of the web feed format RSS and an open-access advocate—as a figure of moral clarity. Swartz was arrested in 2011 for downloading a large number of academic journal articles from JSTOR with the goal of making them publicly accessible. His death by suicide is widely seen as the result of overzealous prosecution, and it sparked a movement advocating the free circulation of knowledge. I share that view, i.e., knowledge should be free, open, and not monopolised by paywalls or elite institutions.

Yet there is a tension when this same group demands stricter copyright laws solely in response to the proliferation of AI-generated art. Such calls, while perhaps well-intentioned, risk strengthening the very systems they seek to dismantle.

The case of Nintendo

Historically, expansive copyright regimes have rarely served the interests of smaller creators; rather, they tend to consolidate power in the hands of large media conglomerates. In attempting to curb the proliferation of generative AI, this group may inadvertently reinforce the legal and economic structures they otherwise critique. Essentially, they are walking into a trap over moral panic.

Take, for example, the case of Nintendo. In 2018, the company filed a lawsuit against the popular ROM-hosting websites LoveROMS.com and LoveRETRO.co, accusing them of “brazen and mass-scale infringement” of its intellectual property rights. It is pertinent to note that this included ROMs of games that were no longer commercially available. The case was ultimately settled out of court: the owners agreed to pay $12 million in damages (a symbolic figure), and both websites were permanently taken offline and replaced with legal takedown notices. The lawsuit, as intended, sent a chilling message across ROM preservation and emulation communities, reinforcing the idea that even archival or non-commercial distribution of older games would not be tolerated.

Moreover, until very recently, Apple prohibited emulator apps on the App Store, which is widely believed to be due to concerns over potential copyright violations and lawsuits by Nintendo. It was only after the European Union’s Digital Markets Act (DMA) came into effect, requiring Apple to allow third-party app stores on iOS devices, that Apple began to relax its restrictions. Within these alternative app stores, emulators quickly gained traction, which prompted Apple to revise its policies to stay competitive.

In September 2022, the European Commission announced that the world’s largest digital companies—designated as “gatekeepers” by the EU—would be required to follow a set of strict rules under the landmark Digital Markets Act (DMA). On March 25, 2024, the EU launched its first-ever investigations under the DMA, targeting Apple, Google’s parent company Alphabet, and Meta.

Photo Credit: Kenzo Tribouillard/AFP

Calls for stronger copyright enforcement, especially as a response to generative AI or digital reproduction, risk entrenching a legal and corporate environment where no one but the largest rights-holders benefits. Rather than protecting individual creators, such precedents often end up curbing preservation efforts, stifling creativity, and reinforcing monopolistic control over cultural memory. To put it bluntly, it’s a counterproductive exercise.

The environmental impact of generative AI is another recurring concern among critics, especially as large-scale models have substantial energy demands. However, to evaluate such concerns, one needs to put them in the broader context of the history of computing and contemporary developments in the field of AI.

In 2024, Geoffrey Hinton and John J. Hopfield won the Nobel Prize in Physics “for foundational discoveries and inventions that enable machine learning with artificial neural networks”. Hinton, in particular, has long been referred to as the “Godfather of AI” for his pivotal role in reviving neural networks as a viable framework for machine learning.

However, neural networks—which paved the way for today’s cutting-edge AI systems—were not always held in such high regard. From the late 1950s through the early 1990s, the dominant approach in AI research was Symbolic AI, which focused on rule-based, logic-driven systems known as expert systems. These “hand-coded” approaches were more interpretable and aligned better with the computational limits of the time. Neural networks, by contrast, were widely regarded as inefficient and speculative.

Neural networks suffered from theoretical bottlenecks such as the XOR problem, famously identified by Marvin Minsky and Seymour Papert in their 1969 book Perceptrons. Minsky and Papert argued that the neural network models of the time, particularly single-layer perceptrons, were fundamentally limited. More broadly, Minsky and his contemporaries contended that such models, while effective for simple tasks, lacked the capacity to scale to more complex cognitive functions. There were also infrastructural issues, such as the lack of computational power. These challenges presumably led to declining interest and funding in neural networks, contributing to what is now known as the first AI winter.

Political backing

The broader takeaway is not merely about technical progress or stagnation, but about how quickly capital, state interest, and institutional focus can coalesce around a single paradigm and how abruptly that support can vanish when expectations are not met. Today, with the dramatic ascent of Large Language Models (LLMs) and generative image tools, we are witnessing a similar influx of global capital and political backing. The prevailing narrative casts this as a necessary path toward Artificial General Intelligence (AGI) or even Superintelligence (there is no unanimous definition of these terms).

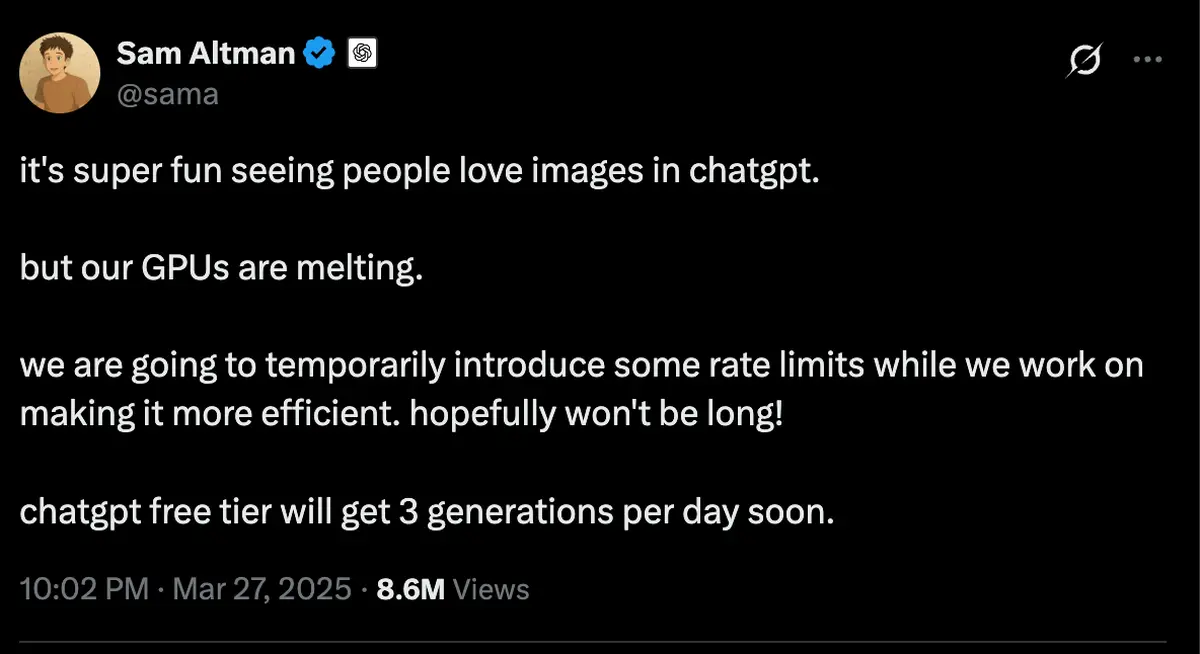

Against this backdrop, responding to the environmental and financial costs of generative AI is not only an ethical necessity but an economic imperative. The responsibility for improving efficiency lies not just with frontier AI labs such as OpenAI but extends across the broader ecosystem, including GPU manufacturers, cloud infrastructure providers, and semiconductor companies. Indications of infrastructural strain are already visible: multiple posts by Sam Altman on X, along with the imposition of rate limits on OpenAI’s image generation models, suggest that current demand is exceeding the operational capacity of available hardware.

If these systems continue to exhibit high energy demands and prohibitive operational costs, the implications for the industry will be significant. Investment capital, by its nature, seeks efficiency and return. Should frontier AI companies fail to reduce costs or demonstrate scalable paths to monetisation, they risk a loss of investor confidence. As seen in earlier technological cycles, such conditions can cause funding, as well as institutional faith, to evaporate quickly.

A post by Sam Altman, CEO of OpenAI, on X, announcing temporary rate limits on image generation in ChatGPT due to high demand and GPU strain.

Photo Credit: Sam Altman on X

Another historical precedent to look at is the IBM 350 RAMAC, introduced in 1956 as the first commercial hard disk drive, which consumed approximately 500 watts of power while offering a storage capacity of a mere 3.75 megabytes. Although revolutionary for its time, it was emblematic of the inefficiencies that characterise early-stage technologies. Over the ensuing decades, advances in engineering and the influence of market competition led to dramatic improvements in storage density, energy efficiency, and cost-effectiveness.

Again, this historical trajectory offers a useful parallel for understanding the future of generative AI. While current models may be energy-intensive and expensive to operate, such conditions do not last forever. If the history of computing is any indication, the viability of general-purpose AI will ultimately depend on its ability to adapt to material constraints and economic pressures. In this sense, market forces are likely to play the decisive role in shaping the long-term fate of the field.

This is not to suggest that generative AI should be exempt from critique simply because of its potential. On the contrary, critical engagement is essential, particularly given that the story of this technology is not only one of computational efficiency but also one of labour, displacement, and inequality. However, such criticism must be grounded in the political, economic, and institutional realities of the present moment.

When nations such as France and India co-chair global initiatives such as the AI Action Summit, and when the Vice President of the US publicly asserts that American AI must remain the global “gold standard”—calling simultaneously for innovation and regulatory leniency—it becomes clear that the Overton window has shifted. The discourse has moved decisively in favour of rapid adoption, and so too has the capital. The vast majority of global investment, public and private, is now flowing into generative AI and adjacent fields.

Grey areas between moral extremes

In this context, appeals to moral absolutism risk losing political efficacy if not accompanied by practical engagement. Policy cannot rely solely on virtue signalling: while such positioning may carry symbolic weight, it often lacks durability in the face of complex technological and economic realities. Moreover, human behaviour rarely operates in absolutes. It is conventional wisdom that people tend to function in the grey areas between moral extremes. The more pressing question is no longer whether generative AI should exist but how it can be responsibly shaped, governed, and integrated into public life.

How might generative AI be thoughtfully incorporated into education systems? What safeguards are needed for young learners who may come to rely on AI despite having weak foundational skills? Anecdotal signs of this shift are already visible: Gen Z reportedly refers to the em dash as the “ChatGPT hyphen”, which suggests that the erosion of basic vocabulary and writing conventions is already underway. And perhaps most urgently, how can societies anticipate and mitigate the labour disruptions these technologies are likely to intensify?

Whether one embraces or resists these developments, it is clear that generative AI has entered a phase of mass adoption, and the recent “Ghibli-fication” trend is a case in point. The imperative now is to proceed with strategic foresight and institutional care. Market forces have already accelerated this transition: the real challenge is to ensure that public policy, social dynamics, and ethical inquiry evolve in tandem.

Kalim Ahmed is a writer and an open-source researcher who focuses on tech accountability, disinformation, and foreign information manipulation and interference (FIMI).

source: https://frontline.thehindu.com/science-and-technology/ghibli-ai-art-generated-images-moral-panic-copyright/article69411983.ece