It’s no hyperbole to say that everything in the modern world runs on data, whether operationally or in the planning and design stages. Sometimes that data gets lost, whether through accidents, tragedies, cyberattacks, or human error, and it can’t always be recovered. A 2022 survey by backup provider Arcserve found that 76% of responding businesses had suffered a loss of critical data, and 45% of those said the loss was permanent.

That’s a lot of companies with backup strategies that need improvement, but what about the big names in the industry? Surely they are better prepared and have safeguards in place. Well, data loss is just as common among the big players. Most of the time you never hear about it, because robust backup strategies and distributed data let them recover silently. The other times? You don’t always hear about those either, but the following incidents were too large to ignore.

6

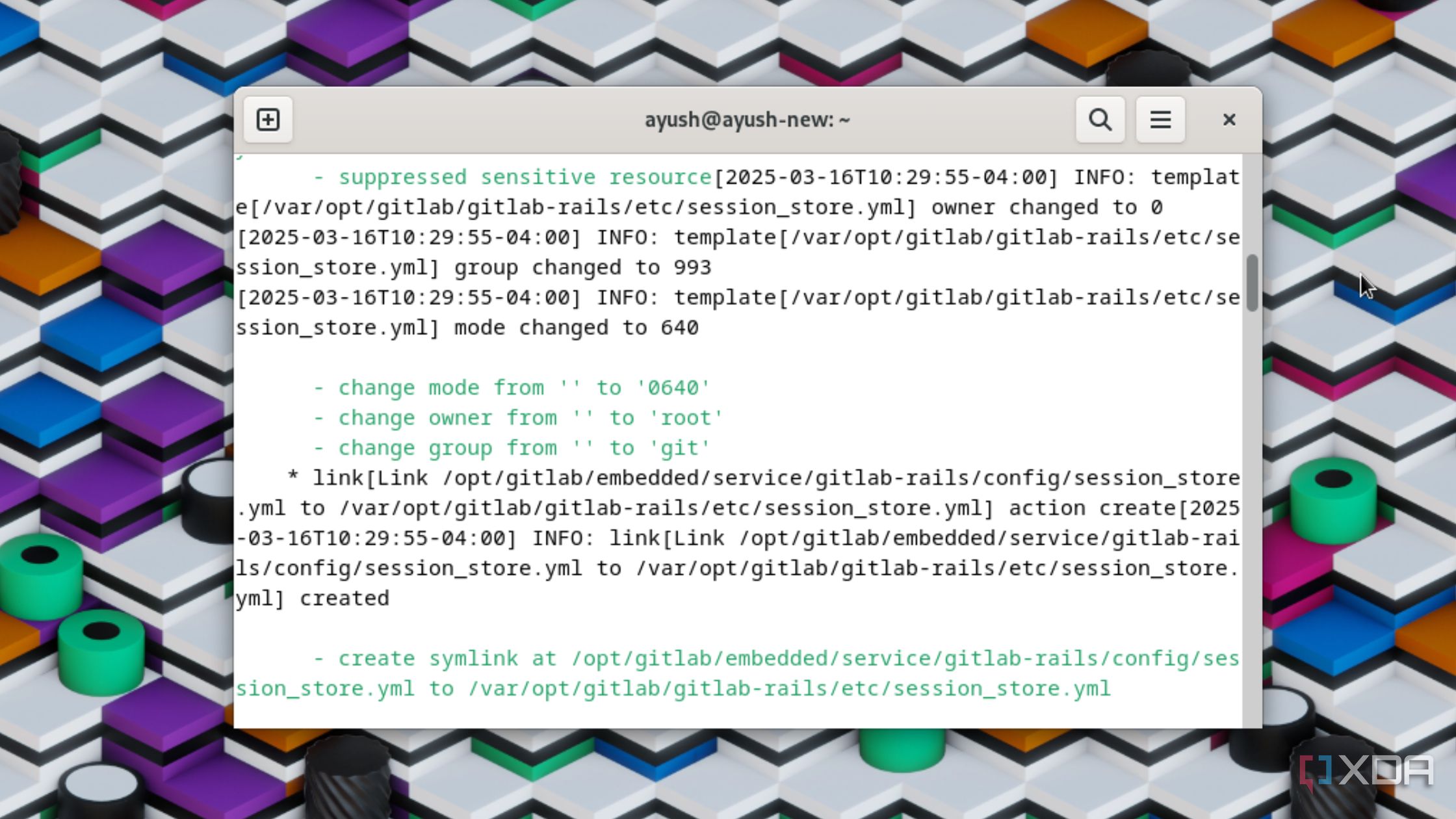

GitLab (2017)

Human error led to 300GB of production data loss with no backups

Over half the world’s business data is in cloud storage, and that includes providers like GitLab. GitLab provides a web-based Git repository with advanced features like a wiki and issue tracking, so companies can keep development, institutional knowledge, and the list of needed bug fixes all in one place. It’s the kind of service that attracts big names like IBM, Sony, and CERN, so it’s well aware of the need for data integrity, backups, and disaster recovery plans.

They often say that no plan survives contact with the enemy, but that might need revising, because it seems plans don’t survive contact with anyone. GitLab found that out in 2017, when it lost 300GB of production data during what should have been a routine maintenance task designed to test database scaling techniques. The mistake? An engineer ran the task on the primary database instead of on the copy they’d created for it. That exposed a string of other failures, several of which had gone unnoticed:

- No safeguards against accidental deletion

- Backups had been silently failing for weeks

- Recovery process was too slow

- Secondary database was out of sync so couldn’t be used to replace the primary
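Backups that fail silently, as in the list above, are the kind of problem a small scheduled check can surface. What follows is a minimal sketch in Python, not GitLab’s actual tooling: the function name `find_backup_problems`, the 24-hour age limit, and the idea that backups land as plain files in a single directory are all assumptions made for illustration.

```python
from pathlib import Path
import time


def find_backup_problems(backup_dir, max_age_seconds=24 * 3600, min_bytes=1, now=None):
    """Return a list of human-readable problems with the newest backup file.

    Hypothetical helper: assumes each backup run writes one file into backup_dir.
    """
    now = time.time() if now is None else now
    directory = Path(backup_dir)
    if not directory.is_dir():
        return ["backup directory missing"]

    files = [p for p in directory.iterdir() if p.is_file()]
    if not files:
        return ["no backup files found"]

    # Only the most recent file matters for a "did last night's run work?" check.
    newest = max(files, key=lambda p: p.stat().st_mtime)
    stat = newest.stat()

    problems = []
    if now - stat.st_mtime > max_age_seconds:
        problems.append(f"{newest.name} is older than {max_age_seconds} seconds")
    if stat.st_size < min_bytes:
        problems.append(f"{newest.name} is only {stat.st_size} bytes")
    return problems
```

An empty list means the newest backup looks plausible. Anything else should reach a person, for example through an email or chat alert, rather than sit in a log nobody reads.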

So, while the initial mistake was human error, plenty of other issues compounded it, and six hours of new data in the production database could not be recovered. To their credit, GitLab analyzed what went wrong, live-streamed the recovery on YouTube, publicly stated the issues, and put guardrails in place for the future. And that one engineer? They were still there afterward, as the CEO told Business Insider that the blame was shared among the entire team.

5

Samsung (2014)

A data center fire left the company without critical data

Data centers tend to be built with highly effective fire suppression systems for fairly obvious reasons, but those systems don’t always work, and even when they do, there’s no guarantee they will save every server with data on it. In April 2014, a data center operated by Samsung caught fire after a blaze started in a power generator on an adjacent rooftop. The incident caused widespread disruptions to various Samsung services, including Samsung payment tools, smart TVs with SmartHub, Samsung phones, Blu-ray players, and plenty more.

As if service disruptions of up to four days weren’t bad enough, when Samsung did manage to move services to other data centers, it discovered that some of the data was lost for good because the servers had no remote backups. According to Korea’s Financial Supervisory Service (via TechNewsWorld), that data center hosted the main servers for Samsung Life Insurance, Samsung Card, and Samsung Asset Management, so the lack of offsite backups was a regulatory mess.

4

Maersk (2017)

NotPetya ransomware took down this shipping giant

Worldwide shipping is heavily reliant on a few mega-companies and methods of transportation, and few are larger than the shipping giant Maersk. In 2017, a group of Russian hackers unleashed a cyberweapon known as NotPetya, which raced across the world and appeared to put ransomware onto the systems it hit. Except it didn’t: the program was designed to destroy data in the shortest time possible, and by the time the fake ransomware screen popped up, the damage had already been done.

Maersk was compromised through a single computer in Odesa, a Ukrainian port on the Black Sea and an important stopping point for international shipping. That’s all it took for NotPetya to race through the organization’s network worldwide, taking down port facilities, the entire booking system, and the computer systems that plan how container ships are loaded so they stay balanced and don’t capsize. It was also a single computer that saved the day: one in a tiny office in Ghana held a pristine backup because it had miraculously been knocked offline before the cyberattack. It took weeks of an incredibly complicated recovery effort before everything was back online and running again, and it could have been catastrophic if that one backup hadn’t been found.

3

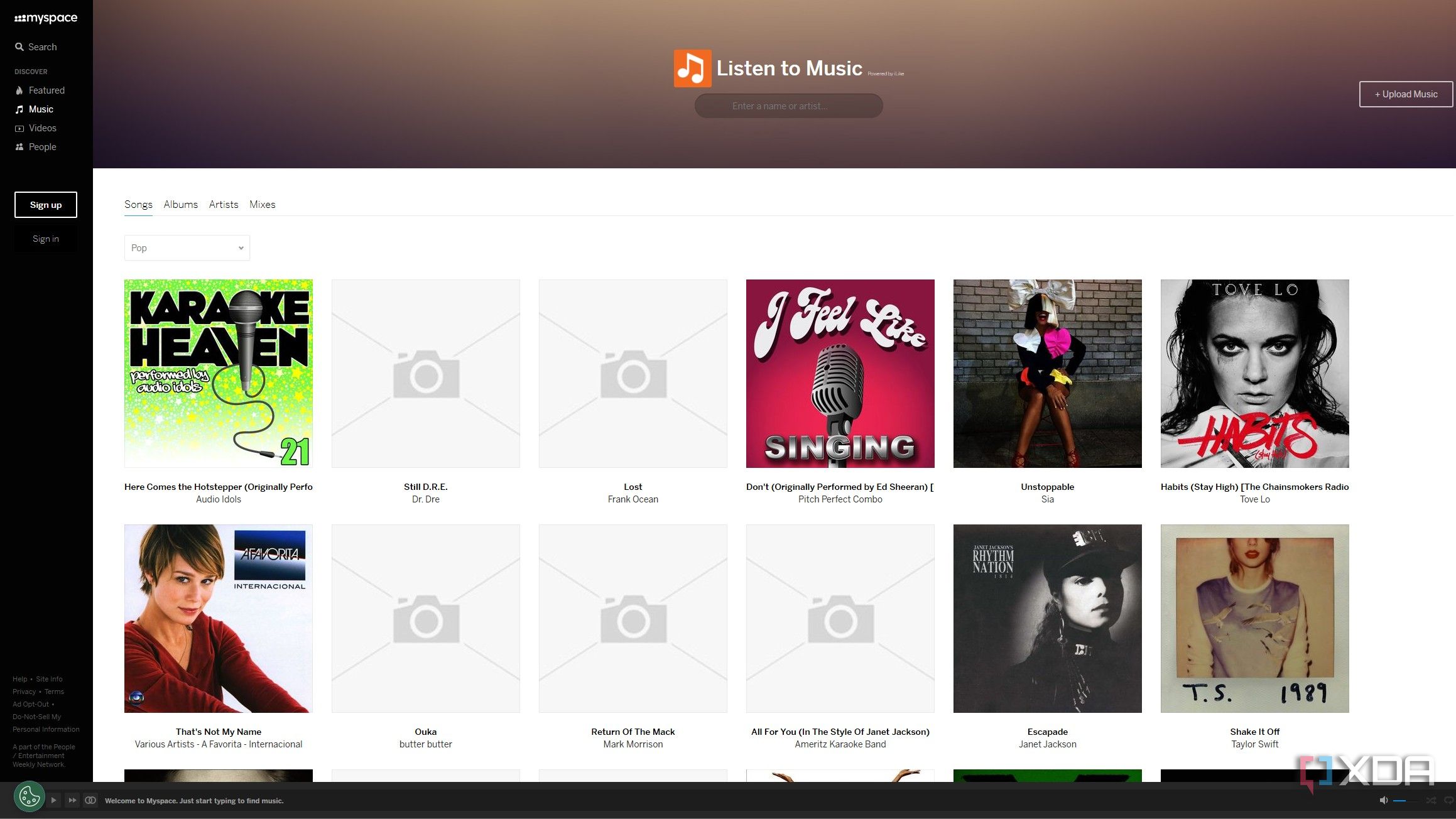

MySpace (2019)

The social media site lost 12 years’ worth of photos, videos, and music uploads

Every facet of our lives gets uploaded to various iterations of social media, but for the longest time, the word for social media was MySpace. The giant of the early 00s was bigger than Google’s homepage in 2006, and no wonder when it was used by everyone from movie stars to up-and-coming bands. Big names like Kate Nash and Arctic Monkeys owe their careers to the music-finding features on MySpace, and so do my own HTML and CSS skills.

Then other social media sites took over, and MySpace faded somewhat but never quite went away. While migrating servers in 2019, MySpace lost a staggering amount of data. While it didn’t lose any accounts, it did lose 12 years of accumulated uploaded music files, photos, and videos, everything from the site’s beginning to some point in 2016. Estimates had the loss at 50 million music files from some 14 million artists. Gone into the ether, just like some of their music careers.

2

Code Spaces (2014)

An attacker got into their AWS and caused chaos

Any data loss by a provider is a bad look, but with the right backups in place, those data blips can be recovered from and the company’s reputation can be salvaged. Sometimes, though, the data loss is insurmountable and forces the company to shut down, as happened to the code hosting service Code Spaces in 2014. This was no simple error, but a coordinated attack by a hacker or hackers that ended with the mass deletion of most of Code Spaces’ cloud storage.

It all started with what seemed like a routine DDoS attack, nothing the company hadn’t defended against before. While Code Spaces was distracted, the attacker gained access to its Amazon EC2 control panel and left an email address to contact. A ransom demand to stop the DDoS attacks was the next step in the chain. The company decided not to pay and thought it was safe, as the attacker hadn’t obtained its private keys.

What the company missed were the multiple backup admin logins that the attacker had created. When the attacker saw the recovery efforts, they started to “randomly delete artifacts from the panel.” By the time the company regained control, most of its data, virtual machines, backups, and offsite backups were either corrupted or deleted. Note that this is a company that did (almost) everything right, with backups, offsite backups, and data resiliency plans, and still lost its data through a cybersecurity incident.

1

Microsoft Sidekick (2009)

T-Mobile and Danger lost all user data in a colossal server fail

XDA was founded because of one single Windows Mobile smartphone, so we have a certain affinity for all phones that Microsoft had a hand in. Before the Redmond giant bought it and its designers went on to create the ill-fated Microsoft Kin range, Danger Inc. was responsible for a hugely popular slide-out phone, the T-Mobile Sidekick. This revolutionary device was perfect for text messaging or using as a PDA, and it backed up all of its personal data to a cloud service.

The phones couldn’t do much without that cloud service, which was necessary for most of the data functions. In 2009, some time after Microsoft’s acquisition of Danger Inc., the data servers stopped working, with catastrophic results. Both the main and backup servers failed while a contractor was doing upgrade work on the storage area network that the Sidekick services ran on. The end result? 800,000 T-Mobile Sidekick users were without their personal data for some time until Microsoft was able to recover it. Two years later, the company discontinued the service, leaving Sidekick fans looking for a new device.

Even professionals sometimes get things wrong and data loss can happen to anyone

Make no mistake about it: data loss can occur at any time, and having more knowledge doesn’t make you immune to it. While you could argue that some of these incidents could have been prevented, the only real protection against data loss is a robust backup strategy. That means automation, so you don’t have to trigger backups manually; offsite backups, so you can recover from physical damage; regular testing of those backups; and immutable backups, so that ransomware can’t overwrite them. These strategies are just as relevant for the end user as for enterprise providers, and they don’t take a large amount of technical knowledge to implement.
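Testing a backup can be as simple as restoring it somewhere else and comparing the result with the original. The Python sketch below shows one way to do that with SHA-256 checksums. The function names (`sha256_of`, `tree_digests`, `verify_restore`) are hypothetical, and it assumes both the source and the restored copy are ordinary directories on disk; it is an illustration, not a replacement for the verification features built into dedicated backup tools.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large files don't need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def tree_digests(root):
    """Map each file's path (relative to root) to its SHA-256 digest."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_restore(source_dir, restored_dir):
    """Return relative paths that are missing or differ in the restored copy."""
    source = tree_digests(source_dir)
    restored = tree_digests(restored_dir)
    return sorted(
        name for name, digest in source.items() if restored.get(name) != digest
    )
```

An empty result means every source file came back byte-for-byte identical. Files that changed in the source after the backup ran will also be reported, so the comparison works best against a snapshot or a folder that isn’t being edited.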

source: https://www.xda-developers.com/imes-companies-wished-they-had-better-backups/